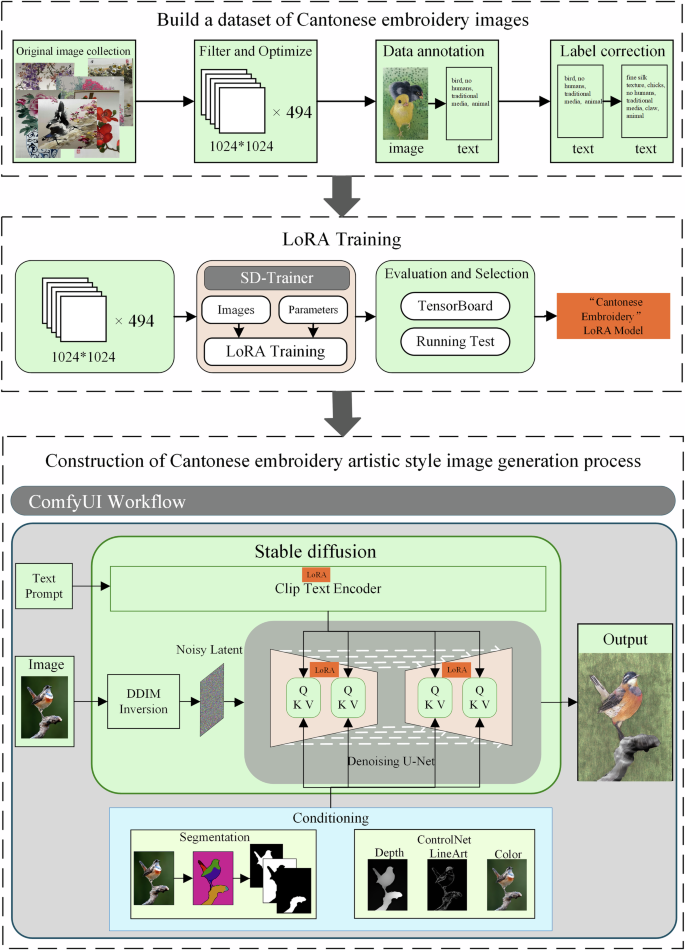

This study constructed a complete image generation process for the artistic style of Cantonese embroidery based on Stable Diffusion, with the workflow presented in Fig. 3. First, a dataset of Cantonese embroidery images with semantic labels was established. Based on this dataset, the adapter gx_lora3.safetensors, which incorporates the texture features of Cantonese embroidery, was trained using low-rank adaptation (LoRA). The trained LoRA model was then applied to the CLIP and UNet layers of Stable Diffusion, enabling the model to generate semantic content with the texture characteristics of Cantonese embroidery. All components were integrated into the ComfyUI [40] platform, where multiple conditions were designed to guide the generation process. Specifically, the SAM model was integrated to perform semantic segmentation on the input images, generating precise masks for different regions. During the denoising process of Stable Diffusion, these masks served as constraints to guide the generation of semantic content in each region. At the same time, ControlNet was introduced: by applying Depth, LineArt, and Color constraints, the geometric structure and color distribution of the generated images were kept consistent with the original images. This solution leveraged the modular pipeline of ComfyUI, combining embroidery texture extraction, precise semantic generation, and preservation of the structure and color of the original images in a single integrated workflow.

The generation pipeline was deployed on a cloud server with a 32-core AMD EPYC 7542 processor (2.9 GHz) and an NVIDIA GeForce RTX 4090D graphics card (48 GB video memory), running Ubuntu 22.04. The deep learning framework was PyTorch 2.7.0 with Compute Unified Device Architecture (CUDA) 12.6. Under this configuration, generating a single Cantonese embroidery-style image at a resolution of 2048×2048 takes approximately 50 seconds.

The input images are sourced from online networks and are free of copyright issues; the flowchart is drawn by the authors.

Construction of the Cantonese embroidery image dataset

Cantonese embroidery works possess high artistic value, but data collection channels are limited. Images available on websites fall far short of the quality required for Cantonese embroidery simulation tasks, so the data had to be collected offline. The data for this study come primarily from two sources: an enterprise and an inheritor of Cantonese embroidery. The first is Guangzhou Embroidery Craft Factory Co., Ltd. [41], the only time-honored production enterprise in the Chinese Cantonese embroidery industry, whose collection combines historical inheritance with industry representativeness. The second is the collection provided by Wang Xinyuan [42], a municipal-level representative inheritor of the national intangible cultural heritage of Cantonese embroidery. The collections from both sources possess genuine academic value and authoritative representativeness, laying a foundation for the reliability of the research. Because all Cantonese embroidery works are framed and preserved in indoor exhibition halls lit by exhibition lamps, images could only be captured on site using mobile phones or cameras. Consequently, issues such as uneven exposure and insufficient clarity arose during the collection process, with examples illustrated in Fig. 4.

These pictures are provided with permission by Guangzhou Embroidery Craft Factory Co., Ltd.

To address issues in the original collected data, such as semantic redundancy, exposure anomalies, and insufficient clarity, data screening, cropping, and optimization were conducted. Images with excessively poor quality or blurred textures were directly discarded. For semantically complex Cantonese embroidery images, cropping was performed to divide them into multiple sub-images. For images with exposure anomalies, a coarse-to-fine deep neural network model based on the Laplacian pyramid [43] was employed. This model performs global color correction and local detail enhancement through multiscale decomposition. Ultimately, a high-quality Cantonese embroidery image dataset was established, as shown in Fig. 5, comprising 494 images characterized by high definition, balanced lighting, simple semantics, and diverse categories. The dataset includes eight major categories: flowers, birds, plants, animals, landscapes, fruits and vegetables, mountains and rivers, and architecture. These categories are further divided into 45 subcategories.
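The multiscale decomposition behind a Laplacian pyramid can be illustrated on a single row of pixel intensities. The following is a minimal sketch only, not the deep network cited above: the function names, the pair-averaging downsampler, and the 0.8 brightness factor are all assumptions chosen for illustration. It shows why a correction applied to the coarse level changes global brightness while the detail bands keep local texture.

```python
def downsample(signal):
    """Average adjacent pairs to halve the resolution."""
    return [(signal[i] + signal[i + 1]) / 2 for i in range(0, len(signal), 2)]


def upsample(signal):
    """Repeat each sample to double the resolution."""
    return [value for value in signal for _ in range(2)]


def build_laplacian_pyramid(signal, levels):
    """Return (detail_bands, coarse); len(signal) must be divisible by 2**levels."""
    bands = []
    current = list(signal)
    for _ in range(levels):
        coarse = downsample(current)
        predicted = upsample(coarse)
        # The band stores what the coarse level cannot predict: local detail.
        bands.append([c - p for c, p in zip(current, predicted)])
        current = coarse
    return bands, current


def reconstruct(bands, coarse):
    """Invert the decomposition by adding detail bands back, coarse to fine."""
    current = list(coarse)
    for band in reversed(bands):
        current = [p + d for p, d in zip(upsample(current), band)]
    return current


# Hypothetical row of pixel intensities with an over-exposed middle section.
row = [10, 12, 40, 44, 200, 210, 90, 94]
bands, coarse = build_laplacian_pyramid(row, levels=2)

# Global correction acts on the coarse level only; detail bands are reused as-is.
corrected = reconstruct(bands, [0.8 * value for value in coarse])
```

Reconstructing from the unmodified coarse level returns the original row exactly, which is the property that lets the global and local corrections be handled separately.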

These pictures are provided with permission by Guangzhou Embroidery Craft Factory Co., Ltd.

To provide reliable semantic guidance for LoRA training, a dedicated image labeling step was implemented beforehand. This study employed the WD14-tagger framework [44] for semantic annotation of images, utilizing the wd-vit-v3 model for initial label parsing with a confidence threshold of 0.4 to filter out low-confidence labels. However, due to the thematic specificity of Cantonese embroidery images, certain labels did not accurately reflect the intended semantics and required manual correction. For example, “hen,” “rooster,” and “peacock” were incorrectly generalized as “bird,” “kapok” was oversimplified as “flower,” and “lychee” was oversimplified as “fruit.” Such mislabeling hindered semantic alignment in subsequent image generation processes.

In addition to misidentification, some labels were missing, such as “tree branches.” Moreover, the embroidery technique for bird claws is distinctive, typically involving short horizontal stitches in the lezhen style, which required special annotation. Furthermore, a unified label, “fine silk texture,” was added to all images. This label captures the high-frequency textural characteristics of silk material, thereby enhancing the generative model’s perceptual accuracy of the embroidery material. Its stable texture pattern also provided auxiliary visual guidance during LoRA training, facilitating faster convergence. Table 1 presents three representative cases of manually assisted label correction for Cantonese embroidery images extracted in this study.
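The labeling procedure described above (threshold filtering, manual correction, and a unified style label) can be sketched as follows. This is an illustrative sketch under stated assumptions: the tagger scores, the helper names `filter_tags` and `correct_tags`, and the inclusive `>=` comparison are hypothetical, and only the 0.4 threshold, the example corrections, and the “fine silk texture” label come from the text.

```python
CONFIDENCE_THRESHOLD = 0.4       # threshold stated in the text
STYLE_TAG = "fine silk texture"  # unified label added to every image


def filter_tags(scored_tags, threshold=CONFIDENCE_THRESHOLD):
    """Keep tags at or above the threshold, ordered by descending confidence."""
    kept = [(tag, score) for tag, score in scored_tags.items() if score >= threshold]
    return [tag for tag, _ in sorted(kept, key=lambda item: item[1], reverse=True)]


def correct_tags(tags, replacements, additions):
    """Replace over-general tags, add missing ones, then append the style tag."""
    corrected = [replacements.get(tag, tag) for tag in tags]
    for tag in list(additions) + [STYLE_TAG]:
        if tag not in corrected:
            corrected.append(tag)
    return corrected


# Hypothetical tagger output for one image of a rooster on a kapok branch.
scored = {"bird": 0.91, "flower": 0.77, "no humans": 0.55, "signature": 0.12}
tags = filter_tags(scored)
caption = correct_tags(
    tags,
    replacements={"bird": "rooster", "flower": "kapok"},
    additions=["tree branches"],
)
print(", ".join(caption))
# rooster, kapok, no humans, tree branches, fine silk texture
```

Keeping the corrections as explicit per-image dictionaries makes the manual step auditable, which matters when a generic tag such as “bird” must map to different species in different images.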

LoRA training

This study utilized a dataset of 494 Cantonese embroidery artworks covering 45 subcategories, all of which were precisely annotated with semantic labels. A phased, progressive fine-tuning strategy was adopted to optimize the generative model. Stable Diffusion 1.5 served as the base model, with targeted fine-tuning conducted through LoRA (Low-Rank Adaptation), a parameter-efficient method. The primary advantage of LoRA lies in its plug-and-play capability: during training, only a small set of low-rank matrix parameters is optimized while the base model weights remain frozen. After training, the low-rank adapter can be directly integrated with the original model, enabling immediate adaptation for Cantonese embroidery style generation without additional adjustments. This greatly enhances the flexibility and efficiency of model deployment.
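The low-rank update that makes LoRA plug-and-play can be written as W' = W + s · (α / r) · BA, where W is the frozen weight, B and A are the trained low-rank factors of rank r, α is the LoRA scaling constant, and s is the strength applied at inference. The sketch below implements this standard formulation in plain Python; the 3×3 matrices, the rank of 1, and the function names are toy assumptions and do not reflect the adapter trained in this study.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_merge(w, a, b, alpha, weight=1.0):
    """Return W + weight * (alpha / r) * (B @ A) without modifying W."""
    rank = len(a)  # A has shape (r, d_in), so its row count is the rank
    scale = weight * alpha / rank
    delta = matmul(b, a)
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


# Toy frozen weight (3x3) and a rank-1 adapter: B is 3x1, A is 1x3.
w = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
b = [[1.0], [2.0], [0.0]]
a = [[0.5, 0.0, 1.0]]

merged = lora_merge(w, a, b, alpha=1.0, weight=0.9)
unchanged = lora_merge(w, a, b, alpha=1.0, weight=0.0)  # strength 0 recovers W
```

Only B and A are trained, so the adapter stores r · (d_in + d_out) values per layer instead of d_in · d_out, and setting the strength to 0 recovers the base model exactly.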

This study employed SD-Trainer [45] as the training platform for LoRA, which performs end-to-end parametric training by encapsulating the underlying computational processes. Training was conducted in expert mode, and Table 2 presents detailed information on the model training parameters.

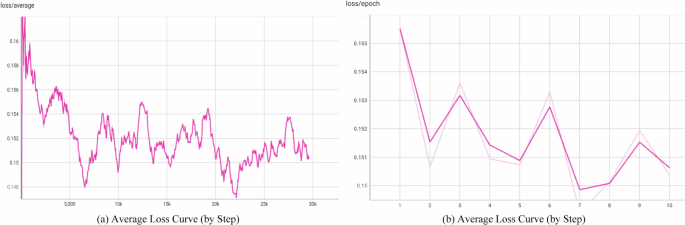

TensorBoard.dev was used to visualize the relationship between loss values and training epochs during the LoRA training process, clearly illustrating the learning progression. As shown in Fig. 6(a), with training steps on the horizontal axis and loss values on the vertical axis, the complete training process consisted of 29,640 steps. In the early training stages, the loss values decreased rapidly, reflecting fast convergence. However, during later stages, the loss values exhibited fluctuations while gradually converging, which can be attributed to the large-scale and semantically complex nature of the dataset, making complete convergence difficult. Nonetheless, Fig. 6(b) reveals an overall convergent trend, with loss values stabilizing at relatively low levels by the 8th epoch. Both figures indicate that, despite some fluctuations, the overall downward trend of the loss values reflects progressive model convergence and optimization. These observations preliminarily confirm that the experimental output of the LoRA model met the required precision standards for deployment.
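The reported step count can be cross-checked with simple arithmetic. The sketch below assumes 10 training epochs (inferred from checkpoints being reported up to the 10th epoch); the combination of 6 repeats per image and a batch size of 1 is only one setting consistent with the reported numbers, and Table 2 remains the authoritative source for the actual parameters.

```python
NUM_IMAGES = 494      # dataset size stated in the text
TOTAL_STEPS = 29_640  # total training steps stated in the text
NUM_EPOCHS = 10       # assumption: inferred from checkpoints up to the 10th epoch

steps_per_epoch = TOTAL_STEPS // NUM_EPOCHS
passes_per_image = steps_per_epoch / NUM_IMAGES  # equals repeats / batch size


def total_steps(num_images, repeats, epochs, batch_size):
    """Total optimizer steps for a dataset seen `repeats` times per epoch."""
    return num_images * repeats * epochs // batch_size


print(steps_per_epoch)      # 2964
print(passes_per_image)     # 6.0
print(8 * steps_per_epoch)  # 23712, the step at which the 8th epoch ends
```

Under these assumptions each image is seen six times per epoch, and the 8th-epoch checkpoint corresponds to step 23,712 on the horizontal axis of the step-wise loss curve.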

a Average loss curve (by step); b Average loss curve (by epoch).

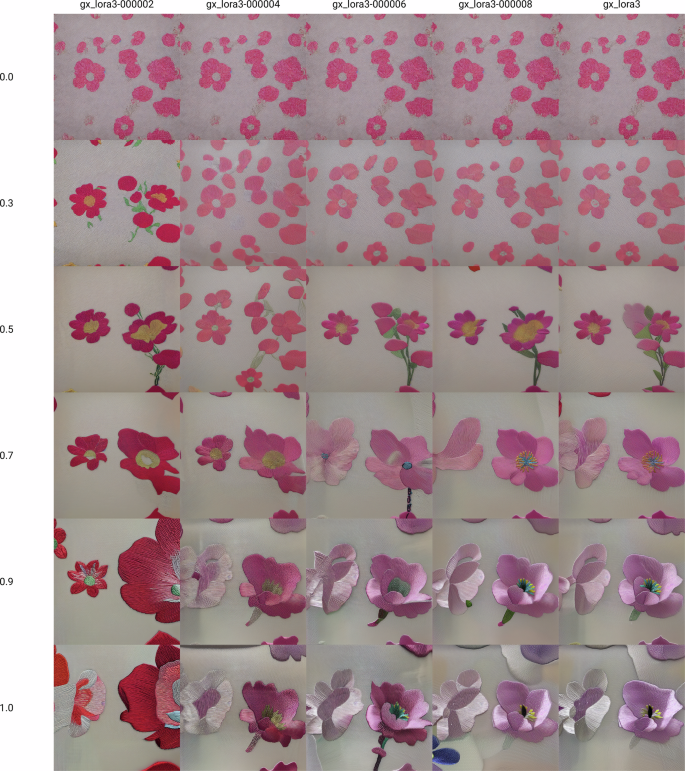

During training, LoRA models were saved at the 2nd, 4th, 6th, 8th, and 10th epochs. Cross-combination experiments were then conducted with weight values of 0.0, 0.3, 0.5, 0.7, 0.9, and 1.0. Comparative analysis of generated Cantonese embroidery-style floral images revealed that higher epochs and larger weights produced more pronounced texture effects (Fig. 7). The LoRA model from the 8th epoch, combined with a weight of 0.9, demonstrated optimal artistic performance, generating images that preserved clear floral morphological structures while faithfully reproducing the unique silk texture and stitching patterns characteristic of Cantonese embroidery. The results also displayed natural, soft color transitions consistent with traditional Cantonese embroidery. By contrast, the 10th epoch with a weight of 1.0 enhanced stylized features excessively, resulting in exaggerated embroidery textures and localized detail distortion. Conversely, lower epochs (2nd–6th) or smaller weights (<0.7) failed to adequately reproduce silk luster and complex stitching techniques.
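The cross-combination experiment amounts to a grid over saved checkpoints and LoRA weights. A minimal sketch of enumerating that grid is given below; the checkpoint file-naming scheme is hypothetical, modeled on the adapter name gx_lora3-000008 that appears later in this paper.

```python
from itertools import product

EPOCHS = [2, 4, 6, 8, 10]                 # checkpoints saved during training
WEIGHTS = [0.0, 0.3, 0.5, 0.7, 0.9, 1.0]  # LoRA weights compared in the text


def checkpoint_name(epoch, stem="gx_lora3"):
    """Hypothetical naming scheme: stem, dash, zero-padded epoch, extension."""
    return f"{stem}-{epoch:06d}.safetensors"


grid = [
    {"lora": checkpoint_name(epoch), "weight": weight}
    for epoch, weight in product(EPOCHS, WEIGHTS)
]

print(len(grid))  # 30 combinations
selected = {"lora": checkpoint_name(8), "weight": 0.9}  # setting chosen in the text
```

Generating every combination with the same prompt and seed isolates the effect of epoch and weight, which is what allows the visual comparison in Fig. 7.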

All the generated images are from LoRA trained by the authors. The figure is drawn by the authors.

These findings indicate that a balance between style enhancement and detail fidelity is essential in Cantonese embroidery style generation. The 8th epoch with a weight of 0.9 achieved this balance, sufficiently showing the artistic characteristics of Cantonese embroidery while maintaining natural visual harmony. Accordingly, this study adopted the LoRA model from the 8th training epoch for parameter fine-tuning of the diffusion model.

Conditional guidance for Cantonese embroidery image generation

In the Stable Diffusion-based image-to-image generation pipeline, input prompts can effectively guide the semantic expression of generated content. However, output images still display considerable randomness in semantic layout, overall geometric structure of patterns, and color distribution. To achieve consistency between generated images and input reference images in terms of semantic correspondence, geometric shapes, and color distribution—while introducing only controlled adjustments to texture style—this study incorporates two prior constraints during the denoising process of the diffusion model. This approach allows precise regulation of the denoising process to accomplish these objectives.

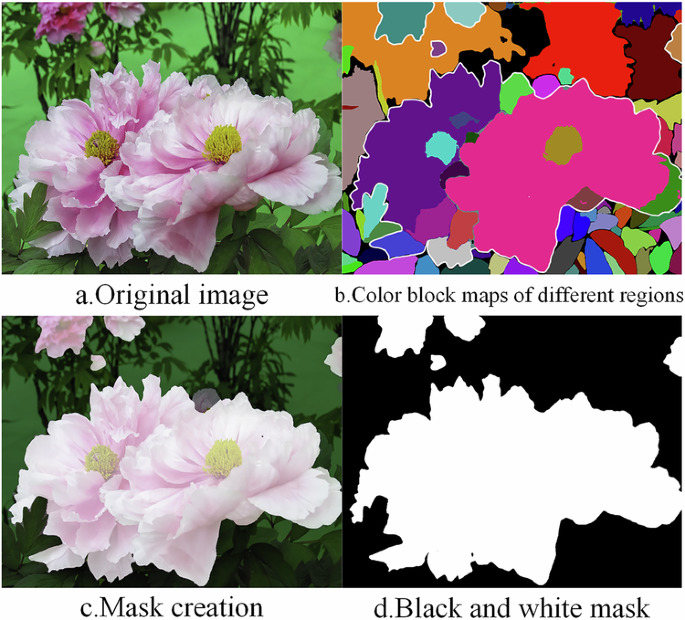

The first constraint focuses on semantic image segmentation and consistency enforcement based on the Segment Anything Model (SAM). In the process of generating Cantonese embroidery-style images, distinguishing complex semantic regions in input images poses a significant challenge. To address this, a segmentation module was introduced for refined processing. First, the SAM was employed to perform pixel-level semantic segmentation on the input image, decomposing it into multiple semantic regions:

$$R\,=\,\left\{{R}_{1},{R}_{2},\ldots ,{R}_{n}\right\}$$

(1)

Here, each region corresponds to a specific semantic block, and a unique color identifier is assigned to these semantic regions via the color coding function defined in Eq. (2), as shown in Fig. 8b:

$$C:{R}_{i}\mapsto {c}_{i}\in {{\mathbb{R}}}^{3},\,{c}_{i}=\left({r}_{i},{g}_{i},{b}_{i}\right)$$

(2)

a Original image. b Color block maps of different regions. c Mask creation. d Black and white mask.

By interactively selecting multiple color-coded regions within the image, the user generates masks corresponding to the target semantics. For example, Fig. 8c shows the selection of all color regions associated with floral elements, namely, the areas enclosed by the white outlines in Fig. 8b, resulting in the corresponding flower mask. Finally, the masks are converted into binary mask images (Fig. 8d), where white areas represent the target generation regions while black ones remain unmodified. These SAM-derived binary masks explicitly restrict the diffusion denoising process to the delineated target semantic regions while suppressing noise interference and unintended content generation in the nontarget (black) regions. Through multiple generations with masks covering different regions, this approach achieves precise semantic generation in complex areas, ensuring clear boundaries between distinct semantic regions and accurate content representation. Notably, these semantic masks are applied throughout the entire diffusion process, and their functional focus varies across denoising stages to support subsequent ControlNet guidance.
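The path from segmented regions to a binary mask can be sketched on a toy label map. The code below is an illustration under stated assumptions: the 4×4 label map stands in for SAM output, and the palette formula is an arbitrary injective assignment rather than the color scheme used in Fig. 8b. `color_code` plays the role of the mapping C in Eq. (2), and `binary_mask` merges the selected regions into one white-on-black mask.

```python
def color_code(region_ids):
    """Assign each region id a distinct RGB triple (the mapping C in Eq. 2)."""
    palette = {}
    for index, region_id in enumerate(sorted(region_ids)):
        # Multiplying by an odd constant modulo 2**24 is a bijection,
        # so different regions always receive different colors.
        value = (index + 1) * 0x3F1F7 % 0x1000000
        palette[region_id] = ((value >> 16) & 0xFF, (value >> 8) & 0xFF, value & 0xFF)
    return palette


def binary_mask(label_map, selected_ids):
    """White (255) where a pixel belongs to a selected region, black (0) elsewhere."""
    selected = set(selected_ids)
    return [[255 if region in selected else 0 for region in row] for row in label_map]


# Toy label map: 0 = background, 1 and 2 = two petals of one flower, 3 = leaf.
label_map = [
    [0, 1, 1, 0],
    [0, 1, 2, 2],
    [3, 3, 2, 0],
    [3, 0, 0, 0],
]
palette = color_code({region for row in label_map for region in row})
flower_mask = binary_mask(label_map, selected_ids=[1, 2])
```

Selecting regions 1 and 2 together mirrors the interactive step in which several color blocks belonging to the same semantic object are merged into a single mask.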

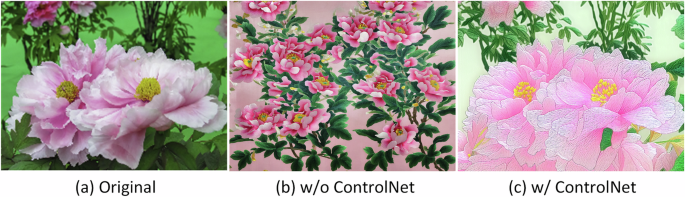

The second constraint centers on geometric structure and color consistency control with the aid of ControlNet. To ensure precise alignment between generated images and reference inputs in both contours and color space, this study augmented the original diffusion model with ControlNet for fine-grained denoising guidance. Unlike SAM masks, which focus on spatial region restrictions, ControlNet acts as a semantic guidance module, providing directional constraints for the denoising process to ensure the generated content conforms to the geometric structure and color characteristics of the input reference image. The core mechanism encodes LineArt, Depth, and Color priors from the input image into conditional tensors, enabling the model to produce structurally and chromatically consistent outputs. Fig. 9 compares the results for an image containing flowers, leaves, and a simple background, generated with and without ControlNet. Both images (b) and (c) contain flowers and leaves. Without ControlNet, however, image (b) fails to maintain the spatial structure and color distribution of the original image, whereas image (c) preserves both, although local semantic ambiguities still need to be refined through SAM-based segmentation.

c w/ControlNet aligns with (a) Original (contour overlap rate >85%), whereas (b) w/o ControlNet shows <50% structural consistency.

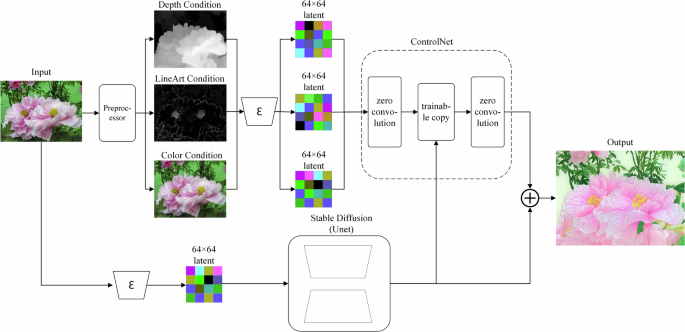

Since Cantonese embroidery is a form of realistic art, this study further adopted Depth, LineArt, and Color conditions to guide the model in incorporating lighting, contour structure, and color characteristics of the original image during the denoising process. Each condition regulates the diffusion process in a complementary way. The Depth condition extracts geometric information from the original image to preserve spatial structure: brighter areas appear closer, while darker areas recede. The LineArt condition enforces alignment of generated edges with those of the input image, ensuring contour accuracy. The Color condition detects the color distribution of the original image and ensures that the generated output reflects a similar distribution. These conditions do not directly manipulate Stable Diffusion. Instead, partial weights are copied from the original UNet encoder, and cross-layer feature fusion with the UNet backbone is achieved via zero-convolution layers. Each condition is separately trained before integration into the original Stable Diffusion. This mechanism ensures semantic consistency while enabling controllable transfer of pixel-level attributes. The incorporation of these three conditions allows the model to pursue the unique texture characteristics of Cantonese embroidery while significantly improving the high-fidelity reproduction of geometric structures and color features from the original image, as depicted in Fig. 10.

The input image is processed through separate preprocessors to extract Depth, LineArt, and Color conditional information, which are then encoded as 64 × 64 feature maps to match the spatial dimensions of the convolutional features. These conditions are incorporated through zero-initialized convolutional layers and fed into a trainable copy of the network. Finally, the network outputs are adjusted via zero convolutions and fused with the original network outputs to generate the target image.
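The role of the zero-initialized convolutions can be seen in a scalar toy model of one ControlNet-style block, y = F(x) + Z_out(F_copy(x + Z_in(c))). This is a sketch only: the affine stand-ins for the network blocks, the scalar "convolutions", and all function names are assumptions for illustration, not the actual architecture.

```python
def frozen_block(x):
    """Stand-in for a frozen Stable Diffusion block (any fixed function)."""
    return [2.0 * value + 1.0 for value in x]


def trainable_copy(x):
    """Stand-in for the trainable copy, initialized from the frozen block."""
    return [2.0 * value + 1.0 for value in x]


def zero_conv(x, weight=0.0, bias=0.0):
    """A 1x1 convolution reduced to a scalar affine map, zero-initialized."""
    return [weight * value + bias for value in x]


def controlled_block(x, condition, w_in=0.0, w_out=0.0, strength=1.0):
    """Frozen output plus the condition-driven residual from the trainable copy."""
    injected = [a + b for a, b in zip(x, zero_conv(condition, w_in))]
    residual = zero_conv(trainable_copy(injected), w_out)
    return [f + strength * r for f, r in zip(frozen_block(x), residual)]


x = [0.5, -1.0]
condition = [1.0, 1.0]  # e.g. one encoded LineArt feature per position

at_init = controlled_block(x, condition)                       # zero weights
trained = controlled_block(x, condition, w_in=0.5, w_out=0.1)  # after training
```

With zero weights the controlled block reproduces the frozen block exactly, so adding a condition branch cannot degrade the base model at the start of training; the `strength` argument corresponds to the control intensity applied at inference.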

The three conditions—Depth, LineArt, and Color—do not employ fixed intensities. For semantic content with complex contour structures, the LineArt control intensity is typically set above 0.6 to ensure accurate reproduction of morphological details; for simpler contours, it is set below 0.6 to allow the model greater freedom to refine local details autonomously. For example, an intensity above 0.6 is applied when generating images with intricate leaf clusters to render the interlaced contours of overlapping leaves clearly. In contrast, a value below 0.6 is preferred for simple shapes such as a single banana—an intensity over 0.6 would excessively extract non-contour internal lines, restricting the model and compromising the natural rendering of internal details. Similarly, the depth control intensity increases above 0.6 in scenarios requiring clear spatial hierarchy but decreases below 0.6 when the three-dimensional structure is less critical. In contrast, the color control intensity is generally set to a relatively high range of 0.8–0.9, which not only extracts color information from the original reference image but also helps the model better understand the underlying semantic content—for example, in regions where flowers and leaves overlap, color cues effectively assist the model in distinguishing the boundaries between different semantic objects. These complementary conditions, when combined, achieve precise restoration of the original image information. Notably, the control intensity of ControlNet is generally not set to the maximum value of 1.0. This is because an intensity of 1.0 would excessively constrain the generation process, forcing the model to mechanically replicate the contours of condition maps (e.g., LineArt maps, depth maps) while sacrificing its ability to generate natural textures and reasonable details. Consequently, the outputs tend to appear rigid and unnatural and may even be affected by “ghosting artifacts” derived from the condition maps.
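The intensity guidelines above can be summarized as a small selection rule. In the sketch below, the specific values 0.7, 0.5, and 0.85 are hypothetical picks from within the ranges stated in the text (above 0.6, below 0.6, and 0.8 to 0.9), and the two boolean inputs are an assumed simplification of what is, in practice, a per-image judgment.

```python
def control_strengths(complex_contours, strong_depth_hierarchy):
    """Pick per-condition ControlNet strengths following the ranges in the text."""
    strengths = {
        "lineart": 0.7 if complex_contours else 0.5,      # above / below 0.6
        "depth": 0.7 if strong_depth_hierarchy else 0.5,  # above / below 0.6
        "color": 0.85,                                    # within 0.8 to 0.9
    }
    # The text advises against the maximum of 1.0 to avoid rigid outputs.
    assert all(value < 1.0 for value in strengths.values())
    return strengths


leaf_cluster = control_strengths(complex_contours=True, strong_depth_hierarchy=True)
single_banana = control_strengths(complex_contours=False, strong_depth_hierarchy=False)
print(leaf_cluster)   # {'lineart': 0.7, 'depth': 0.7, 'color': 0.85}
print(single_banana)  # {'lineart': 0.5, 'depth': 0.5, 'color': 0.85}
```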

In synergy with ControlNet guidance, the SAM semantic masks exert stage-specific effects during diffusion: (1) In the early diffusion stages with high noise levels, the masks primarily fulfill the role of region delimitation, ensuring that all denoising updates are confined within the target semantic regions and preventing the generation of off-target content that deviates from the input reference. (2) In the middle and late diffusion stages with low noise levels, the masks cooperate with the Depth, LineArt, and Color priors of ControlNet to refine the local details of the target regions, making the generated Cantonese embroidery patterns both spatially accurate and semantically consistent with the input reference image.
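The region-delimiting role of the mask can be sketched as a per-step blend that accepts updates inside the mask and restores the reference outside it. This is a simplified illustration, not the sampler used in this study: the toy denoising step is a stand-in for the diffusion model, and the re-noising of the reference to the current noise level, which a real masked sampler performs, is omitted.

```python
def toy_denoise_step(latent, target, rate=0.5):
    """Stand-in for one denoising step: move part of the way toward a target."""
    return [value + rate * (goal - value) for value, goal in zip(latent, target)]


def masked_denoise(reference, target, mask, steps):
    """Run `steps` updates, keeping reference values wherever the mask is 0."""
    latent = list(reference)
    for _ in range(steps):
        proposal = toy_denoise_step(latent, target)
        # Accept the update inside the mask, restore the reference outside it.
        latent = [m * p + (1 - m) * r for p, r, m in zip(proposal, reference, mask)]
    return latent


reference = [1.0, 1.0, 1.0, 1.0]  # flattened reference latent
target = [5.0, 5.0, 5.0, 5.0]     # what unconstrained denoising would produce
mask = [1, 1, 0, 0]               # 1 = target region, 0 = protected region

result = masked_denoise(reference, target, mask, steps=3)
print(result)  # [4.5, 4.5, 1.0, 1.0]
```

The protected positions stay at their reference values after every step, which is the behavior described for the black regions of the SAM-derived masks.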

Integrated Cantonese embroidery artistic-style image generation system based on ComfyUI

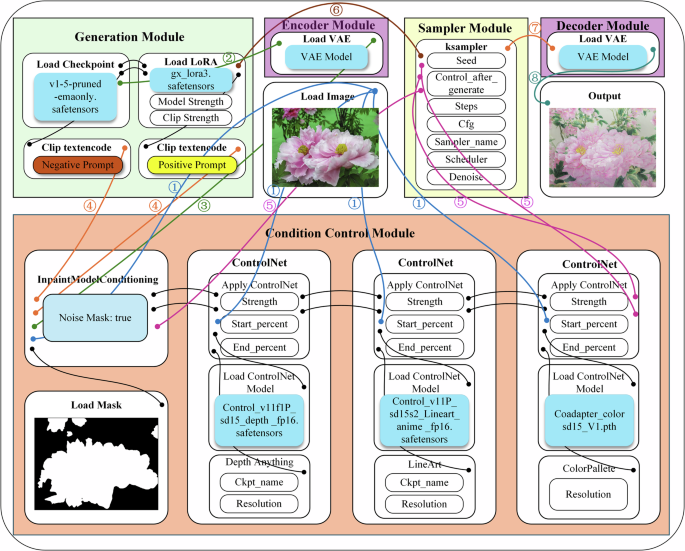

Figure 11 illustrates the image-to-image workflow for generating artistic Cantonese embroidery styles in this study, implemented on the ComfyUI platform. This platform uses a node-based visual programming interface, enabling the flexible combination of various generative components, such as Stable Diffusion. The workflow consists of five core modules: the generation module, encoder module, condition control module, sampler module, and decoder module. The parameter configurations for the relevant modules are detailed in Table 3. The modular design of ComfyUI allows each component to be independently debugged and optimized, thereby significantly improving development efficiency. While preserving the original image content, this workflow enables accurate simulation of the Cantonese embroidery artistic style.

The system constructs a complete production pipeline beginning with the generation module, which includes the base denoising model. The encoder module enables processing in latent space, while the sampler module performs precise image denoising under the guidance of the condition control module. Finally, the decoder module reconstructs the latent variables into normal images for output. Lines with numbers represent the transmission of data streams.

As shown in ① in the figure, the input image is passed to the mask conditioning module to match the mask and to three ControlNets for extracting Depth, LineArt, and Color information. The condition control module is designed to provide control conditions for the denoising process, as shown in ⑤ in the figure. The mask controls the semantic regions involved in denoising, whereas the ControlNets extract spatial structure, contours, and color information from the original image to guide denoising. These complementary conditions collectively achieve precise restoration of the original image’s information.

Because the denoising process of the diffusion model operates entirely within the latent space, the model must be able to locate the regions to be modified within that space. To this end, the VAE [46] encoding module, as illustrated in ② and ③ of the figure, encodes the mask image at the latent-space scale used by the diffusion model, thereby providing clear spatial guidance for the model.
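Matching a pixel-space mask to the latent grid can be sketched as a block-wise downsampling. The factor of 8 is the spatial downsampling of the Stable Diffusion 1.5 VAE; the "any pixel active" rule and the function name are assumptions for illustration, and the resampling actually performed inside ComfyUI may differ.

```python
LATENT_FACTOR = 8  # the SD 1.5 VAE reduces each spatial dimension by 8


def mask_to_latent(mask, factor=LATENT_FACTOR):
    """Downsample a binary mask; a latent cell is active if any covered pixel is.

    The mask height and width must be multiples of `factor`.
    """
    height, width = len(mask), len(mask[0])
    return [
        [
            1 if any(
                mask[y][x]
                for y in range(top, top + factor)
                for x in range(left, left + factor)
            ) else 0
            for left in range(0, width, factor)
        ]
        for top in range(0, height, factor)
    ]


# Toy 16x16 mask: a white 8x8 square in the top-left plus one stray white pixel.
mask = [[0] * 16 for _ in range(16)]
for y in range(8):
    for x in range(8):
        mask[y][x] = 255
mask[12][3] = 255

latent_mask = mask_to_latent(mask)
print(latent_mask)  # [[1, 0], [1, 0]]
```

Because one latent cell covers an 8×8 pixel block, even a single white pixel activates the whole cell, which is why mask boundaries are coarser in latent space than in the input image.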

The generation module comprises the base Stable Diffusion model, the LoRA fine-tuned model, and positive/negative CLIP text encoders. It is based on the pretrained Stable Diffusion v1-5 model (v1-5-pruned-emaonly.safetensors), with stylized generation achieved through the integration of a LoRA adapter (gx_lora3-000008) optimized for the Cantonese embroidery style. CLIP text encoding further guides image generation. Positive prompts include both semantic cues and core Cantonese embroidery feature descriptors such as “fine silk texture”, “silk sheen”, and “precise stitching”. Negative prompts such as “low quality”, “blurry”, and “text” are applied to avoid common defects. As shown in ④ in the figure, the prompt words are converted into feature vectors by the text encoder, which are then fused with other constraint signals in the condition control module, including the region information marked by the mask and the spatial features extracted by ControlNet. This produces a unified generation target signal, ensuring that the model not only adheres to the textual description but also meets precise requirements at the spatial and regional levels.
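The generation module can be expressed as a fragment of a ComfyUI workflow in its API (JSON) form, here built as a Python dictionary. This is a hedged sketch rather than the authors' workflow: the node ids, the semantic prompt, the `.safetensors` extension on the adapter file name, and the use of the same 0.9 strength for both the model and CLIP are assumptions, and the ControlNet, mask, sampler, and decoder nodes are omitted.

```python
def build_generation_module(semantic_prompt, lora_weight=0.9):
    """Checkpoint loader, LoRA loader, and positive/negative CLIP text encoders."""
    positive = ", ".join(
        [semantic_prompt, "fine silk texture", "silk sheen", "precise stitching"]
    )
    negative = ", ".join(["low quality", "blurry", "text"])
    return {
        "1": {"class_type": "CheckpointLoaderSimple",
              "inputs": {"ckpt_name": "v1-5-pruned-emaonly.safetensors"}},
        # Links are [source_node_id, output_index]; the checkpoint loader
        # exposes MODEL at index 0 and CLIP at index 1.
        "2": {"class_type": "LoraLoader",
              "inputs": {"model": ["1", 0], "clip": ["1", 1],
                         "lora_name": "gx_lora3-000008.safetensors",  # assumed file name
                         "strength_model": lora_weight,
                         "strength_clip": lora_weight}},
        "3": {"class_type": "CLIPTextEncode",
              "inputs": {"clip": ["2", 1], "text": positive}},
        "4": {"class_type": "CLIPTextEncode",
              "inputs": {"clip": ["2", 1], "text": negative}},
    }


def dangling_links(graph):
    """List (node_id, input_name) pairs whose link targets a missing node."""
    return [
        (node_id, name)
        for node_id, node in graph.items()
        for name, value in node["inputs"].items()
        if isinstance(value, list) and value[0] not in graph
    ]


graph = build_generation_module("red kapok flowers on a branch")  # hypothetical prompt
print(dangling_links(graph))  # []
```

Routing both text encoders through the CLIP output of the LoRA loader reflects the statement that the adapter is applied to the CLIP layers as well as the UNet.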

The K-Sampler operates based on the base model parameters provided by the generation module (as shown in ⑥) and the constraint signals output by the condition control module, performing an iterative denoising process in the latent space. It progressively refines the initial noise vector under the combined guidance of the base model (and its LoRA adaptation) and multisource conditions (text semantics, mask regions, and ControlNet spatial features), ultimately generating the target latent variable representation.

The latent variables denoised by the K-Sampler are decoded into a normal output image via the decoder, as illustrated in ⑦ and ⑧ of the figure. As a pair of inverse operations, encoding and decoding serve to confine all key denoising processes within the latent space, thereby enhancing computational efficiency while ensuring generation quality.
