Experimental environment

All experiments were conducted on a single NVIDIA RTX 4090 GPU (24GB VRAM), paired with an Intel i9-14900K CPU (24 cores, 32 threads), 64GB DDR5 RAM, and a 1.8TB NVMe SSD. The software environment included Ubuntu 22.04.3 LTS, CUDA 11.7, cuDNN 8.5, and PyTorch 2.0.0. Under this setup, peak GPU memory usage remained below 18.3GB during training, confirming the model’s efficiency on standard workstation hardware without requiring multi-GPU clusters.

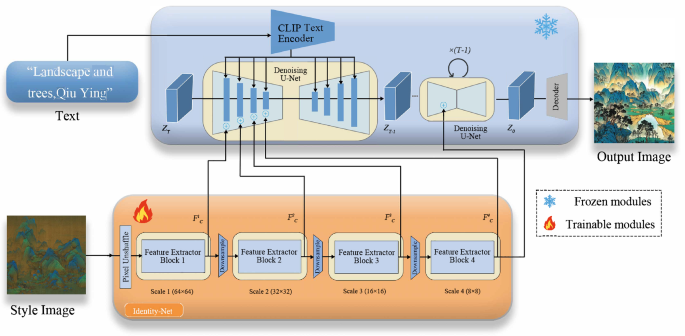

In our experiments, Stable Diffusion 1.5 enabled full-parameter fine-tuning with a peak memory usage of only 18.3GB, making it well suited to single-GPU deployment. Moreover, Stable Diffusion 1.5’s U-Net architecture has been widely validated in art-related tasks, including art style transfer. Textual Inversion learns pseudo-word embeddings from 3-5 images to map visual concepts into text space for zero-shot adaptation [61], while language-diffusion synergy integrates pretrained language models with diffusion architectures to enhance semantic alignment and photorealism, demonstrating efficient customization and high-fidelity generation [38].

Painting-42

Collection. Despite the existence of numerous art datasets that facilitate AI learning, such as VisualLink [62], Art500k [63], and Artemis [64], few datasets focus specifically on Chinese paintings from distinct historical eras with unique styles or techniques. Advancing Chinese painting within the AI learning field requires more accurate and comprehensive datasets tailored to these contexts. To address this gap, we curated a dataset of 4,055 Chinese paintings spanning various historical periods and diverse artistic styles, sourced from online platforms and artist albums. The resolution distribution of these paintings is detailed in Fig. 2. To keep the data stable and accurate, and to alleviate issues such as image blur, detail loss, distortion, or noise amplification, we standardized all paintings to a consistent resolution of \(512 \times 512\). This resolution matches the training-data specification of the SD model, which supports model stability and output quality; deviations from it could compromise the fidelity of the generated outputs. Throughout standardization, we emphasized preserving the distinct painting styles, compositions, and aesthetic features characteristic of each historical era. Larger paintings were carefully cropped and segmented so that each segment captured the essence of the original artwork. In doing so, we aimed to create a high-quality dataset that facilitates the development of AI models capable of generating authentic and diverse Chinese paintings.
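The cropping-and-segmentation idea can be sketched as follows. This is only an illustration under stated assumptions, not the procedure used to build the dataset (the text describes careful, manual cropping): it assumes an image is first scaled so that its short edge is 512 pixels and is then cut into evenly spaced \(512 \times 512\) windows along its long edge. The function name `tile_boxes` and the even-spacing rule are hypothetical.

```python
import math

TILE = 512  # target resolution stated in the text


def tile_boxes(width, height, tile=TILE):
    """Return (scaled_size, boxes) for cutting an image into tile x tile crops.

    Assumption for illustration: scale so the short edge equals `tile`,
    then place evenly spaced windows along the long edge.
    Boxes are (left, top, right, bottom) in scaled-image coordinates.
    """
    scale = tile / min(width, height)
    new_w = max(tile, round(width * scale))
    new_h = max(tile, round(height * scale))
    long_edge = max(new_w, new_h)
    # Enough windows to cover the long edge; neighbours overlap when needed.
    n = math.ceil(long_edge / tile)
    if n == 1:
        offsets = [0]
    else:
        step = (long_edge - tile) / (n - 1)
        offsets = [round(i * step) for i in range(n)]
    if new_w >= new_h:
        boxes = [(x, 0, x + tile, tile) for x in offsets]
    else:
        boxes = [(0, y, tile, y + tile) for y in offsets]
    return (new_w, new_h), boxes
```

For a tall hanging scroll the windows run top to bottom; for a wide handscroll they run left to right. In practice each candidate crop would still need a manual check that it preserves the composition, as the text emphasizes.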

This table shows the size of the short edge of the images in the elemental data we collected, revealing that resolutions are predominantly concentrated between 400 and 3100 pixels. Notably, a significant number of these images exceed dimensions of \(512 \times 512\) pixels.

Painting-42 is organized by the styles of 42 historically influential Chinese painters and includes artworks from six different dynasties, reflecting different style distributions.

Electronic replicas of Chinese paintings often fail to capture the intricate details and nuances found in the originals. To address this limitation, we meticulously adjusted the image parameters to enhance the painting features while minimizing noise. This process involved careful screening to remove low-resolution and redundant works, resulting in a curated collection of 4,055 high-quality Chinese painting images. These images cover various categories and styles of ancient and modern Chinese art, including blue-green landscapes, golden and blue-green landscapes, fine brush painting, flower and bird painting, etc. They also feature characteristic techniques such as plain drawing and texture strokes, showcasing the unique artistic traditions of Chinese painting. Each image was selected to ensure it authentically represents its respective era, enabling comparisons between painters and their unique styles or techniques. This curated dataset serves as valuable material for advancing machine learning research and facilitating the preservation and innovation of Chinese painting art in the digital age. Notably, the dataset includes works from 42 renowned Chinese artists that span seven different dynasties in Chinese history, providing a comprehensive representation of the evolution of Chinese painting styles. This initiative marks the creation of the first Chinese painting style dataset tailored specifically for T2I tasks. The distribution among dynasties within the dataset is visualized in Fig. 3, illustrating the breadth and depth of the collection. By making this dataset available, we hope to contribute to the development of more sophisticated machine-learning models that can appreciate and generate Chinese paintings with greater accuracy and nuance.

Copyright compliance was a fundamental consideration in the construction of the Painting-42 dataset. The dataset primarily consists of ancient Chinese paintings that are in the public domain, sourced from open-access museum collections and archives. According to the Copyright Law of the People’s Republic of China (2020 Amendment) [65], copyright protection for artworks expires 50 years after the death of the author. As the dataset exclusively includes works that have far exceeded this protection period, their use is fully compliant with legal requirements. No copyrighted modern artworks were included, ensuring that all images in the dataset are legally available for academic and research purposes.

Labels. To streamline the T2I generation process, we extracted elements and features of Chinese paintings from painting names and reannotated them in natural language, rather than annotating the paintings directly. The annotation workflow is illustrated in Fig. 4. Initially, we used BLIP-2 [66] for annotation and applied a filtering method (CapFilt) as an experimental approach. CapFilt leverages dataset guidance to filter out noisy, automatically generated image captions. However, after a manual review and comparison, we identified areas where this method could be improved, particularly in accurately identifying the free-hand or abstract expression techniques common in Chinese painting. To improve annotation quality, we consulted experts in Chinese art history and Chinese painting and refined the annotations for these types of images through detailed manual adjustments. Key enhancements included appending keywords such as “Chinese painting”, “era”, and “author” to each image description, highlighting core stylistic features of Chinese painting. Furthermore, we categorized the distinct painting techniques associated with painters from different eras, so that the model could accurately distinguish and recognize the expressive techniques characteristic of each era and painter. Finally, we organized these annotated text-image pairs into a structured JSON file for seamless integration into our T2I generation pipeline.
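As a rough illustration of how such annotated text-image pairs might be stored, the sketch below appends the “Chinese painting”, era, and author keywords to a caption and serializes the result as JSON. The schema is not given in the text, so every field name, the file name, the sample caption, and the technique tag are hypothetical.

```python
import json


def build_record(image_file, caption, era, author, techniques=()):
    """Assemble one text-image pair; all field names are hypothetical."""
    # Keywords appended to every description, as described in the text.
    keywords = ["Chinese painting", era, author, *techniques]
    prompt = ", ".join([caption.strip().rstrip("."), *keywords])
    return {
        "image": image_file,
        "caption": prompt,
        "era": era,
        "author": author,
        "techniques": list(techniques),
    }


records = [
    build_record(
        "tang_yin_0001.png",            # hypothetical file name
        "a bird perched on a branch.",  # e.g. a manually corrected caption
        era="Ming Dynasty",
        author="Tang Yin",
        techniques=["fine brush painting"],  # hypothetical technique tag
    )
]

# ensure_ascii=False keeps any Chinese characters readable in the file.
annotations_json = json.dumps(records, ensure_ascii=False, indent=2)
```

Keeping the era, author, and technique as separate fields in addition to the combined caption makes it easy to filter the dataset by painter or dynasty later.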

The image processing workflow for Painting-42 involves several steps. Initially, raw images were captioned using BLIP CapFilt, and the generated captions were manually validated and corrected. Additionally, manual cropping and enhancement techniques were applied to the original images to obtain uniformly sized \(512 \times 512\) images along with corresponding labels.

Quantitative evaluation

All stylized reference images used for demonstration and comparison (including those in qualitative comparisons with DALL-E 3 and Midjourney) were strictly drawn from the held-out test set and were never involved in the model’s training process.

Our objective in assisted design is to ensure that the Chinese paintings generated by our model exhibit harmonious layouts, vibrant colors, delicate brushwork, and a cohesive composition that balances diversity with stability, all guided by the provided theme description. To this end, we chose metrics that primarily assess the coherence between text descriptions and generated images and the aesthetic quality of those images. We employ a suite of metrics: CLIP Score [70], LPIPS [71], FID [72], Visual Text Consistency (VTC), Accurate Style Learning (ASL), Aesthetics Preference (AP), and Creativeness. CLIP Score measures the similarity between the CLIP embeddings of a generated image and its input text, giving a numerical score for text-image alignment. LPIPS compares deep features extracted from two images to provide a nuanced estimate of their perceptual similarity. FID evaluates the quality and diversity of generated images by comparing the distribution of generated images with that of real images in the feature space of a pretrained Inception network. VTC is a crucial criterion for assessing model performance, as it ensures that the generated image accurately represents the details specified in the input text. ASL evaluates the model’s ability to authentically replicate the style of a specific artist. AP assesses the model’s capacity to generate visually appealing images while adhering to essential artistic principles. Creativeness emphasizes imaginative image generation that showcases a unique style.
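In its common form, CLIP Score reduces to a cosine similarity between an image embedding and a text embedding produced by a CLIP model. The sketch below shows only that final step on precomputed embeddings passed in as plain lists; obtaining the embeddings from a CLIP encoder is not shown, and the function name is hypothetical. Some implementations additionally rescale the value or clip it at zero, and the text does not state which variant was used.

```python
import math


def cosine_similarity(image_emb, text_emb):
    """Cosine similarity between two equal-length embedding vectors."""
    if len(image_emb) != len(text_emb):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(image_emb, text_emb))
    norm_i = math.sqrt(sum(x * x for x in image_emb))
    norm_t = math.sqrt(sum(y * y for y in text_emb))
    if norm_i == 0 or norm_t == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_i * norm_t)
```

A value near 1 means the image and text embeddings point in almost the same direction, while a value near 0 means they are unrelated in the embedding space.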

We conducted a comprehensive comparative analysis of our PDANet method against state-of-the-art models, including DALL-E 3, Midjourney, Midjourney + reference, DreamWorks Diffusion, and PuLID-FLUX, and present empirical evidence of our advances. To ensure a thorough evaluation across diverse categories and difficulty levels of text descriptions, we randomly selected eight prompts from PartiPrompts [73], a dataset comprising over 1,600 English prompts. For each prompt, we generated 50 painting-style images, and the final scores were averaged across all datasets to provide a robust assessment. The results, summarized in Table 1, showcase PDANet’s performance. Notably, PDANet attained a CLIP Score of 0.8147, the highest among all evaluated methods, demonstrating its ability to generate images that align with the input text descriptions. In terms of LPIPS, PDANet scored 0.5519, substantially surpassing other models and underscoring its strength in producing visually coherent images. In terms of FID, PDANet scored 2037, the best score among all models, demonstrating its excellent generated image quality. In the VTC, ASL, AP, and Creativeness evaluations, 60.6%, 68.2%, 53.0%, and 56.1% of users, respectively, selected images generated by PDANet, significantly surpassing the proportion of users choosing images from other models.
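The sampling-and-averaging protocol described above can be sketched as follows. This is a minimal illustration, assuming that scores are averaged per prompt and then across prompts (the text does not spell out the aggregation order). `score_fn` is a hypothetical callable standing in for "generate image number i for this prompt and score it", and the fixed seed is an assumption added for reproducibility.

```python
import random
from statistics import mean


def evaluate(prompts, score_fn, n_prompts=8, n_images=50, seed=0):
    """Sample prompts, score n_images generations per prompt, and average."""
    rng = random.Random(seed)  # fixed seed so the same prompts are drawn again
    chosen = rng.sample(list(prompts), n_prompts)
    per_prompt = {}
    for prompt in chosen:
        scores = [score_fn(prompt, i) for i in range(n_images)]
        per_prompt[prompt] = mean(scores)
    overall = mean(list(per_prompt.values()))
    return per_prompt, overall
```

With the defaults, each method is scored on 8 × 50 = 400 generated images, and every method can be evaluated on the same sampled prompts by reusing the seed.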

Prompts serve as controls and are randomly selected from the PartiPrompts corpus, with a style image and a depth image as painting-style conditions. Our method is compared with images generated by state-of-the-art models, including DALL-E 3, Midjourney, Midjourney + reference, DreamWorks Diffusion, and PuLID-FLUX.

These findings collectively underscore PDANet’s remarkable capability to align generated images with input text descriptions, confirming its state-of-the-art status in producing painting-style images. The empirical evidence presented in this study validates the effectiveness of our proposed approach and highlights its potential for applications in T2I synthesis.

Qualitative analysis

In this study, we extract seven key prompt words from the PartiPrompts corpus to generate images in various Chinese painting styles. We then apply these prompts to multiple models, including DALL-E 3, Midjourney, Midjourney + reference, DreamWorks Diffusion, PuLID-FLUX, and PDANet (Ours), to evaluate their performance.

Comparative analysis of our method against the state-of-the-art methods.

Through systematic experiments and analyses, as illustrated in Fig. 5, we evaluated various methods for generating images in the style of Chinese paintings. Although the DALL-E 3 method displays certain features of this style, the artistic quality of its generated images is somewhat constrained. In contrast, the Midjourney and Midjourney + reference methods tend to produce more realistic images rather than true ink art renderings. The DreamWorks Diffusion and PuLID-FLUX methods generate images rich in detail, yet they struggle to replicate the stylistic traits of traditional artists accurately. Our proposed model framework utilizes a multimodal approach, integrating multiple modalities to extract and simulate essential stylistic elements of Chinese paintings, such as brushstroke features, visual rhythm, and color palette. Compared to these other methods, our approach offers enhanced learning capabilities and greater generation stability, enabling it to capture the complex and subtle characteristics of the Chinese painting style, resulting in more accurate and aesthetically pleasing images.

By using multiple conditions as control variables and inputting diverse prompts, we conduct ablation studies to compare and primarily assess the complexity of the images generated by PDANet (Ours).

As illustrated in Fig. 6, we conducted comparative experiments against several well-established approaches, including LoRA (Low-Rank Adaptation), ControlNet, and IP-Adapter, to comprehensively evaluate the efficiency, controllability, and generation quality of our proposed method. The results reveal the following key observations. LoRA significantly improves training efficiency due to its parameter-efficient design. However, it exhibits clear limitations in maintaining style consistency and fine-grained detail fidelity, especially in challenging generation tasks involving complex human poses. IP-Adapter demonstrates strong controllability but often suffers from reduced diversity and noticeable detail loss in generated images, limiting its applicability in scenarios requiring high-fidelity generation. ControlNet offers precise conditioning but imposes higher computational overhead and resource consumption during training and inference. In contrast, our proposed framework achieves a better balance between image quality, style consistency, and efficiency. It consistently generates photorealistic and style-consistent results while maintaining manageable computational costs, making it more practical for real-world deployment.
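To make the "parameter-efficient design" of LoRA concrete: for a weight matrix of shape d_out × d_in, full fine-tuning updates d_out · d_in parameters, whereas a rank-r LoRA update trains only two low-rank factors with r · (d_out + d_in) parameters in total. The sketch below works through that arithmetic; the layer size and rank are hypothetical values chosen for illustration and are not taken from the experiments.

```python
def full_params(d_out, d_in):
    """Trainable parameters when the whole weight matrix is fine-tuned."""
    return d_out * d_in


def lora_params(d_out, d_in, rank):
    """Trainable parameters in the factors B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


# Hypothetical layer size and rank, for illustration only.
d_out = d_in = 1024
rank = 4

ratio = lora_params(d_out, d_in, rank) / full_params(d_out, d_in)
print(f"LoRA trains {ratio:.4%} of this layer's parameters")
```

The saving grows with layer size, which is why LoRA trains quickly, but the low-rank constraint is also one plausible reason for the loss of fine-grained detail noted above.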

This study demonstrates the advantage of PDANet in comprehending and interpreting complex prompts, thereby establishing a novel theoretical and practical foundation for stylized generation in the T2I field. By leveraging these capabilities, PDANet sets a new benchmark for T2I synthesis, enabling the generation of highly stylized and contextually relevant images that accurately capture the essence of the input text.

Our framework is style-agnostic and applicable to diverse art forms. While this study focuses on Chinese paintings due to dataset accessibility and their complex textures, the model design does not embed any bias toward Chinese art. Modules like the style attention layer and multi-scale fusion are based on generic visual feature modeling, enabling adaptation to various textures, compositions, and brushstroke patterns. By replacing or expanding the dataset, the framework can be fine-tuned for other styles such as Western oil paintings or modern digital art without architectural changes.

Ablation study

In this paper, we aim to use several low-cost, simple adapters to unlock more controllability from the SD model without affecting its original network topology or generation ability. Therefore, in this part, we focus on studying the manner of injecting multiple conditions and the complexity of our T2I-Adapter. The results are summarized in Table 2, where PDANet (Ours) demonstrates excellent performance. PDANet’s CLIP Score is 0.8844, the highest among all evaluated methods. In terms of LPIPS, PDANet scored 0.4763, far exceeding the other methods. In terms of FID, PDANet scored 774, the best among all methods, demonstrating its outstanding generated image quality. In the VTC, ASL, AP, and Creativeness evaluations, 62.1%, 65.2%, 63.6%, and 59.1% of users, respectively, selected images generated by PDANet, far exceeding the proportion of users who selected images from other methods.

As shown in Fig. 7, we conducted a comprehensive evaluation of model performance using style images for ablation studies, and evaluated multiple methods for generating image styles in response to different prompts. The results demonstrate that PDANet is proficient in interpreting diverse prompt instructions, particularly complex and lengthy descriptions. It consistently generates Chinese painting-style images that faithfully match the given instructions, maintaining high fidelity in visual style while making notable strides in visual text consistency, accurate style learning, aesthetics preference, and creativeness. PDANet delivers consistently satisfactory results, showing its robustness and reliability in generating high-quality images that meet the desired standards. The model’s capacity to accurately interpret and respond to varied prompt instructions underscores its potential for applications in T2I synthesis, where the ability to generate diverse and contextually relevant images is paramount.

We first performed the ablation study to verify the effectiveness of each core module and determine the optimal combination of components and hyperparameters. After finalizing the model configuration based on the ablation results, we conducted the quantitative evaluation (Sec. 4.3) to benchmark the optimized model against baselines.

User study

The Figure presents questionnaire cases evaluating visual text consistency (VTC), accurate style learning (ASL), aesthetics preference (AP) and creativeness. In this case, (A) represents DALL-E 3, (B) represents Midjourney, (C) stands for Midjourney + reference, (D) depicts DreamWorks Diffusion, (E) depicts PuLID-FLUX and (F) represents PDANet, our proposed method.

In this user research evaluation, we conducted a comprehensive analysis of the images generated by the model across four key dimensions: visual text consistency, accurate style learning, aesthetics preference, and creativeness. To assess the model’s performance, users were asked to select the work that best aligned with these evaluation criteria from images generated by six different models. Visual text consistency was a crucial aspect of the evaluation, as it assesses the model’s ability to maintain thematic coherence in design creation, similar to evaluating a designer’s skill in this regard. This dimension forms a fundamental criterion for evaluating model performance, as it ensures that the generated images accurately reflect the content specified in the input text. By prioritizing visual text consistency, we can guarantee that the model produces images that are not only aesthetically pleasing but also contextually relevant and faithful to the original text.

Secondly, the assessment of accurate style learning aims at evaluating the model’s capability to faithfully replicate the style of a particular artist. This is achieved by measuring the similarity between the images generated by the model and the original works of the artist. In terms of aesthetics preference, a comprehensive quality assessment of the generated Chinese painting style images is conducted based on artistic principles, including composition, arrangement of elements, and visual expression. This dimension examines whether the model can produce visually appealing images while adhering to fundamental artistic principles.

Finally, in evaluating the creativeness, we focus on striking a balance between creativity and stability to generate imaginative images with unique styles. Our experimental results reveal that incorporating reference images as control conditions tends to constrain the model’s creativity, often compromising the delicate balance between creativity and stability in the generated images. To comprehensively assess the performance of PDANet in terms of style creativity and stability, we conducted a comparative analysis with state-of-the-art models, including DALL-E 3, Midjourney, Midjourney + reference, DreamWorks Diffusion, and PuLID-FLUX. This evaluation enables us to rigorously examine the strengths and weaknesses of PDANet in achieving a harmonious balance between creativity and stability.

Our research sample includes 52 survey questionnaires from 12 cities in China, among which 35 participants have art education backgrounds and a profound appreciation for Chinese painting art. This ensured the evaluation’s professionalism and reliability. As illustrated in Fig. 8, we labeled the generated images from six models as follows: (A) DALL-E 3, (B) Midjourney, (C) Midjourney + reference, (D) DreamWorks Diffusion, (E) PuLID-FLUX and (F) PDANet (Ours).

The user study comparing PDANet with state-of-the-art methods reveals user preferences. Here, we introduce four metrics for evaluating generative models in design tasks: visual text consistency, accurate style learning, aesthetics preference and creativeness. The questionnaire content corresponding to these metrics is detailed in Fig. 8.

In terms of visual text consistency, the image generated by PDANet more accurately captured the essence of the keywords provided, while Models (A), (B), (C), (D) and (E) exhibited varying degrees of deviation in replicating the art style. This disparity highlights the superiority of PDANet in terms of style fidelity. In terms of accurate style learning, we assessed each model’s capacity to learn and reproduce the style inspired by the works of the renowned Ming Dynasty painter Tang Yin. Characterized by exquisite elegance and delicate, serene brushwork, Tang Yin’s paintings are a hallmark of Chinese art. Notably, PDANet demonstrated a high level of consistency with Tang Yin’s style in terms of visual aesthetics and brushstroke quality, underscoring its ability to capture the nuances of artistic expression. In evaluating aesthetics preference, option F consistently exhibits a cohesive and visually striking expression throughout the creative process, showcasing a deep interpretation of the prompt “a painting of a bird, the paint by tang yin.” This option aligns closely with the respondents’ aesthetic expectations and demonstrates superior artistic merit compared to the alternatives. Ultimately, we conducted a thorough analysis of the stylistic effects of each model in generating various images, emphasizing content and style creativity. Our findings indicated that the first five models struggled to accurately capture the unique characteristics of Chinese paintings from specific periods, exhibiting notable limitations in creativeness, particularly concerning brushstrokes. In contrast, PDANet consistently delivered creative and high-quality outcomes, effectively embodying the aesthetic nuances of painting styles, composition, and imagery from diverse eras.

As illustrated in Fig. 9, the statistical data reveal that PDANet has garnered significant user preference and widespread recognition for its graphical consistency, stylistic precision, and aesthetic appeal. The results indicate that PDANet’s output is considered more visually appealing and style-consistent than the other options, meeting the aesthetic expectations of respondents. Across the four dimensions of VTC, ASL, AP, and Creativeness, 60.6%, 68.2%, 53.0%, and 56.1% of users favored option F, respectively. This underscores PDANet’s advantage in maintaining stylistic stability in images and in generating stable, visually appealing images that align with user aesthetic expectations.
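Preference shares of this kind are obtained by tallying one vote per respondent for each dimension and dividing by the number of respondents. The sketch below shows that computation; the ballots are toy values invented for illustration and are not the study’s data, and the function name is hypothetical.

```python
from collections import Counter

OPTIONS = "ABCDEF"  # A-E: compared models, F: PDANet, as labeled in the text


def preference_share(votes):
    """Percentage of respondents choosing each option, rounded to 0.1%."""
    total = len(votes)
    if total == 0:
        raise ValueError("no votes to tally")
    counts = Counter(votes)
    return {opt: round(100 * counts[opt] / total, 1) for opt in OPTIONS}


# Hypothetical toy ballots for a single dimension (not the study's data).
toy_votes = list("FFFFFFABCD")
shares = preference_share(toy_votes)
```

Running the same tally separately for VTC, ASL, AP, and Creativeness yields one share per option and dimension, which is the form in which the results are reported.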

Generated showcase

Images generated in various styles using the same depth image and reference images from different painters, following the method outlined in this paper. These images show a strong identity of each painter’s style, and each style is unique and clear at a glance, for example the painting styles of Shen Zhou, Tang Yin, and Wang Hui.

Figure 10 shows Chinese paintings generated by the proposed method in the painting styles of Shen Zhou, Tang Yin, and Wang Hui. The generated works strictly follow the aesthetic principles of their respective styles while achieving extremely high richness and refinement in detail. Specifically, the outlines, the modeling techniques, and the precise coloring are all rendered clearly and vividly, demonstrating the distinct artistic character of each style and capturing the core charm of traditional Chinese painting.

It is worth noting that although these three Chinese paintings were created from the same depth image, each final result is unique, demonstrating strong stylized painting capability. The shapes of the trees and the distribution of leaves are accurately depicted, forming a good visual hierarchy and sense of space. The successful application of this method demonstrates its practical feasibility. It not only reduces the technical difficulty of the creative process but also significantly improves its efficiency, allowing designers to focus more on creativity and inspiration.
