Related work

Artwork restoration encompasses two primary approaches: manual restoration and digital restoration using computer technology for image synthesis. Both methods play crucial roles in preserving the integrity and beauty of art pieces, each with its unique set of tools, techniques, and applications.

Manual restoration, often referred to as traditional restoration, involves hands-on intervention in artworks by highly skilled conservators17,18 This approach relies heavily on the expert’s knowledge of art history, materials science and artistic techniques. Manual restorers use a variety of tools and materials to clean, consolidate and repair damaged artworks, addressing issues such as cracks, tears, fading, and structural weaknesses. Their work requires meticulous attention to detail, as even the smallest mistake can irreversibly alter the artwork’s appearance and value. Concurrently, hand restoration exhibits certain limitations, being a methodology that is not only time-consuming and labor-intensive but also has the potential to inflict secondary damage upon the artwork in question.

With the advancement of computer vision, digital restoration methods have gained prominence19,20. Early approaches focused on texture synthesis and regression-based techniques to predict missing image regions21. These methods were limited by low creativity and insufficient data representation7. The introduction of deep learning led to the adoption of GANs5,6, which improved the restoration quality and adaptability of the data. However, GANs face challenges such as training instability and mode collapse22. More recently, large-parameter models and multimodal architectures have opened up new possibilities in restoration tasks12,15, with vision-language models like CLIP16 contributing additional flexibility and creativity.

These developments highlight the transition from manual to algorithmic methods in artwork restoration and suggest that multimodal models can play a central role in bridging semantic and stylistic cues.

In parallel, image synthesis has emerged as a powerful tool for generating artistic content, with techniques evolving from GANs to more recent transformer- and diffusion-based models.

Until the advent of diffusion models11,12,15, GANs held sway over the realm of image synthesis tasks, with text-to-image generation being a pivotal subdomain. Since their inception7, GANs have produced images that closely resemble real-world imagery due to their reliance on Nash equilibrium and adversarial training, which presents a viable solution to the text-to-image challenge9,22,23. Scott Reed et al. were pioneers in leveraging GANs for text-to-image synthesis8, initiating the process by parsing the input description through natural language processing to instruct the generator in outputting an accurate and natural depiction of the text. The discriminator then describes and discriminates the generated image, participating in an iterative dance with the generator8. The text-conditional convolutional GAN marked the debut of image synthesis driven by text, albeit with results that were short of expectations, requiring extensive training to yield a singular outcome9. The limitation of producing a single description per result has persisted as a recurring issue in GANs.

Subsequently, Zhang H et al. introduced StackGAN23 and StackGAN++9, dividing the synthesis task into a multistage process where the first stage generates coarse results and the second stage refines these details to produce high-quality images. However, this approach introduced computational complexity, rendering training arduous and susceptible to mode collapse9,22. In contrast to GANs, Variational Autoencoders (VAEs)24, a likelihood-based generative model, possess a more refined structure and expedite image synthesis, albeit at the expense of image quality compared to GANs. Furthermore, Auto-regressive (AR) transformers24,25, which integrate CLIP16 and GPT26,27,28, demonstrate the capability to generate more intricate and superior quality images. However, this comes at the cost of increased computational complexity25.

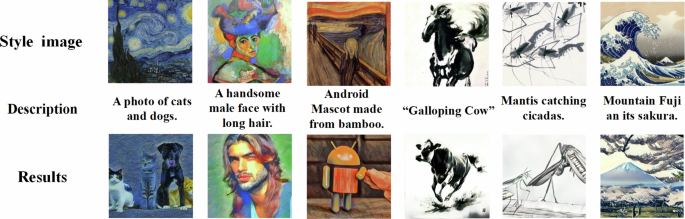

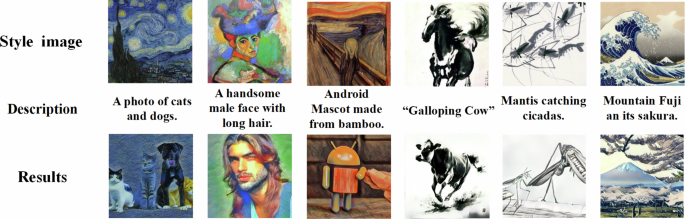

Among these advances, diffusion models have gained significant attention due to their robustness and ability to generate high-fidelity images without suffering from issues such as mode collapse Fig. 1.

The combination of content with various styles can provide a continuous stream of imagination for the artwork, and also offers more possibilities for the restoration of artistic pieces.

The Denoising Diffusion Probabilistic Model (DDPM) was introduced and adapted for text-to-image tasks by Ho et al. 10. Diffusion models, which are generative models grounded in Markov chain principles29, encompass two primary stages: a diffusion process that transitions from the original image to normally distributed noise and a reverse process that reconstructs the original image from the noise11,12,15,30,31. In particular, diffusion models are resilient to mode collapse due to their capacity to preserve the semantic structure of the data10,29.

Ramesh A et al. presented DALL.E 2, a seminal text-to-image model, which leverages the powerful language-image model CLIP16 and a guidance diffusion model known as GLIDE32. DALL.E 2 comprises two components: a prior and a decoder11. The prior transforms text embeddings into image embeddings, which are then utilized to modulate the diffusion decoder to produce the final image. Compared to DALL.E 211, Imagen12 enhances image realism by increasing the size of the language model and employs a continuous diffusion model to refine the generated image. By freezing the weights during text embedding, Imagen simplifies complex structures, reduces computational overhead, and achieves state-of-the-art results in image synthesis. However, both DALL.E 2 and Imagen require extensive training times, often measured in hundreds of GPU days. The LDM addresses this challenge by incorporating latent space processing15.

The core of diffusion-based image generation lies in feature reconstruction from noise through iterative denoising steps. However, the success of this process is contingent upon the model’s ability to extract representative features. In this context, transformer architectures-originally developed for NLP-have demonstrated superior capability in visual feature extraction, leading to their widespread adaptation in vision models such as ViT and Swin33. Motivated by this success, the field of computer vision has gradually embraced the transformer as a potential replacement for convolutional neural networks. However, initial attempts failed to yield promising results as a result of the transformer’s high computational demands. To address this, Google Labs introduced ViT (Visual Transformer)34, which replaced the traditional pixel-based visual processing unit with image patches. This innovation overcame the significant challenge of computational complexity and paved a new path for image processing tasks, enabling the exploration of novel methodologies14,35,36,37.

Despite its potential, ViT necessitates a substantial amount of data and complex computational resources. To mitigate these limitations, Liu et al. proposed the Swin Transformer14, a novel visual transformer based on a hierarchical feature map. The Swin Transformer employs a shifted window multi-head attention mechanism (SW-MSA) to significantly reduce computational complexity. Furthermore, its hierarchical structure allows for the extraction of more detailed image features, leading to superior performance in several fundamental image processing tasks14,36,37.

Our work

To achieve the task of generation of style and text-conditioned artwork, we introduce a novel network architecture termed Imagest. This network integrates input text features and style features through a sophisticated cross-attention mechanism and autoregressive processes. The Imagest architecture primarily encompasses two key modules: the LDM module and the feature prompts module. Within the feature prompts module, the text features are derived from a pre-trained CLIP model16, while the style features are obtained from a style-specific Swin encoder. A detailed illustration of our network architecture is provided in Fig. 2.

The first module, denoted in blue, is the LDM module. The second module, highlighted in orange, encompasses the style Swin encoder and the CLIP module.

Building on the latent space representations established in prior work, we now introduce the core generative backbone of our framework, which governs the transformation from textual and stylistic conditions to synthesized images.

Our LDM module builds upon prior research endeavors, notably15 and ref. 35, and comprises two fundamental components: a pre-trained autoencoder and a diffusion model. The pretrained autoencoder plays a pivotal role in facilitating perceptual image compression, thereby mitigating the computational complexities associated with high-dimensional spaces. Conversely, the diffusion model undertakes the task of image generation.

The input image is initially passed through the pre-trained autoencoder35, which then feeds its output into the diffusion model15. This sequence of operations maps the image from a high-dimensional pixel space to a more compact, low-dimensional latent space. The autoencoder, denoted as ε, is trained using a blend of perceptual loss and adversarial loss to enhance its performance. Following denoising by the diffusion model, a decoder, designated as D, is employed to reconstruct the latent space features back into a coherent image, effectively reversing the compression process executed by the encoder ε.

In the latent space, the diffusion model is segmented into two distinct phases: the diffusion process and the inverse diffusion process, as detailed in prior works10,29. The diffusion process involves transforming the training sample features into independent samples that conform to a normal distribution through the iterative addition of noise, facilitated by components such as TimeEmbedding and Residual Block10,29,38,39. Subsequently, our proposed inverse diffusion process leverages a denoising U-net block architecture to progressively refine each Gaussian-distributed sample feature, adhering to the predefined noise addition strategy for effective denoising.

In more detail, given an RGB image \(\,\text{Image}\,\in {{\mathbb{R}}}^{H\times W\times 3}\), this image first goes to the encoder ε, which will map it to the lower dimensional latent space in z = ε(Image). Subsequently, z will enter the diffusion process,after T time steps of noise addition, z turns into zT at step T. zT are satisfied:

$${z}^{T}=\sqrt{1-{\beta }_{T}}{z}^{T-1}+\sqrt{{\beta }_{T}}{y}_{T-1}$$

(1)

with βT ∈ [0, 1] being a hyperparameter of the diffusion schedule and yT−1 ~ N(0, 1) being a standard Gaussian noise. Due to the known noise addition strategy, for each step T, zT can be calculated iteratively, which will be used to optimize the diffusion model.

Next, the standard Gaussian sample zT is refined and denoised, and in each denoising step, we use a denoising U-net \({y}_{\theta }\left(T,{z}^{T}\right)\)37, The optimization of each iteration should be:

$$L={{\mathbb{E}}}_{\varepsilon \text{(image),}{y}_{T-1} \sim N(0,1),T}\left[{\left\Vert y-{y}_{\theta }\left({z}^{T},T\right)\right\Vert }_{2}^{2}\right]$$

(2)

LDM possesses the capability to incorporate conditional constraints, such as image-based and text-based constraints, by leveraging the cross-attention mechanism embedded within the denoising U-Net architecture. Our research focuses on refining and composing images through the fine-tuning of stable diffusion 1.4. Specifically, during this process, we freeze the parameters associated with the noise addition component. Meanwhile, text and style cues are introduced as conditional inputs via the cross-attention mechanism. Additionally, we incorporate fixed-style features by training a style extractor in conjunction with the denoising U-Net. Ultimately, the newly generated image is reconstructed using a pre-trained decoder from latent space, yielding the desired final result.

To effectively incorporate both visual style and textual semantics, we developed a dual-branch conditioning module. The following section outlines the strategies employed to extract and align these conditioning signals.

Given a style image \(\,\text{Image}\,\in {{\mathbb{R}}}^{H\times W\times 3}\) and a content text that delineates the specific content requirements, our objective is to utilize the style image to render an image that corresponds to the described text, thereby generating a distinctive artwork. To accomplish this task of style and text-conditioned image generation within a feasible amount of training data and computational time, we initially leverage the pretrained CLIP16 to generate text embeddings \({e}_{crt}\in {{\mathbb{R}}}^{{T}_{x}\times {d}_{t}}\), where Tx represents the tokens derived from the content requirements text, and dt denotes the dimension of the token embeddings. Subsequently, we extract style sequences through our specially designed Style Swin Encoder. The architecture of our Style Swin Encoder is illustrated in Fig. 3.

The Style Swin Encoder processes patch-partitioned style images through four stages of non-shifted Swin Transformer Blocks, removing the original shifted-window self-attention to reduce computation and avoid unwanted global semantic mixing in style representation.

For capturing style-specific representations, we adopt a lightweight transformer-based encoder. This design balances expressiveness and efficiency, making it well-suited to our application domain.

In most diffusion-based conditional generation frameworks, Vision Transformers (ViT) are commonly used as image encoders due to their global receptive field and strong feature extraction capabilities16,40. However, for high-resolution image inputs, ViT incurs substantial computational cost due to its quadratic complexity with respect to spatial dimensions. To address this, we adopt the Swin Transformer14 as our style encoder.

Swin Transformer introduces a hierarchical structure with shifted window-based attention, which significantly reduces computational complexity while maintaining a strong local feature learning capability. According to the analysis in ref. 14, the theoretical floating point operations (FLOPs) of the Swin Transformer are substantially lower than those of ViT under high-resolution inputs. For example, in our task, when processing a 256 × 256 image:

This represents a reduction of nearly 75% in the computation, making the Swin Transformer more suitable for our application scenario, where efficient but expressive style encoding is essential. Furthermore, we simplify the original Swin architecture by removing window-shift operations and reducing attention depth, resulting in additional computational savings without significantly affecting representation quality.

To further reduce the computational overhead and tailor the encoder for style representation rather than dense semantic modeling, we simplify the original Swin architecture in two key ways. First, we remove the shifted window mechanism, which is mainly designed to enhance long-range dependency modeling but introduces additional complexity and memory operations. Second, instead of using relative position bias with cyclic shift, we adopt a simpler absolute deviation-based positional encoding scheme. This not only improves training stability but also leads to faster inference with negligible impact on style representation performance.

Our Style Swin Encoder comprises four Swin stages, with each stage containing two Swin Transformer blocks and one Patch Merging block. Since the extracted features are low-dimensional style features, the concern of information exchange between different windows is mitigated, allowing for the adoption of a simpler window attention mechanism. Specifically, an RGB pixel image \(\,\text{Image}\,\in {{\mathbb{R}}}^{H\times W\times 3}\) is fed into the Patch Partition layer, where it is flattened in the channel direction to obtain image patches. The size of each image patch is 4 × 4, and the feature dimension of the image patch is 48 (resulting from 4 × 4 × 3). Consequently, the dimension of the image is transformed from H × W × 3 to \(\frac{H}{4}\times \frac{W}{4}\times 48\) after passing through the Patch Partition layer. Following this, a linear embedding layer14 is applied to this initial feature map, projecting it to an arbitrary dimension (denoted as C). As a result, the dimension of the feature map of the image becomes \(\frac{H}{4}\times \frac{W}{4}\times C\), and a linear sequence ZS of feature map styles is obtained.

Subsequently, the sequence ZS is fed into a sequence of two contiguous Swin-Transformer blocks. A Swin Transformer block primarily comprises a window-based multi-head self-attention mechanism (W-MSA) followed by a multi-layer perceptron (MLP). Notably, a linear normalization layer precedes both the W-MSA and the MLP components. As a result, the combination of the initial linear embedding layer and the subsequent contiguous double Swin Transformer block constitutes the first encoding stage of the Swin Transformer. During this encoding process, the sequences are transformed into query matrix Q, key matrix K, and value matrix V for the computation of the attention mechanism, as follows:

$$Q={Z}_{S}{W}_{Q},\quad K={Z}_{S}{W}_{K},\quad V={Z}_{S}{W}_{V}$$

(3)

Here, WQ, WK, and WV belong to the space \({R}^{C\times {d}_{{\rm{head}}}}\), where \({d}_{{\rm{head}}}=\frac{C}{N}\), and N denotes the number of heads in the multi-head attention mechanism. The attention mechanism, which incorporates relative position encoding via a bias term \(B\in {{\mathbb{R}}}^{{M}^{2}\times {M}^{2}}\), is calculated as:

$$\,\text{Attention}(Q,K,V)=\text{SoftMax}\,\left(\frac{Q{K}^{T}}{\sqrt{d}}+B\right)V$$

(4)

where d is the dimension of Q/K, without considering the issue of information exchange between different windows. Next, the input style sequence is entered into a new Swin Transformer block and computed:

$$\begin{array}{l}{\hat{z}}^{l}=WMSA\left(LN\left({z}^{l-1}\right)\right)+{z}^{l-1},\\ {z}^{l}=MLP\left(LN\left({\hat{z}}^{l}\right)\right)+{\hat{z}}^{l}\end{array}$$

(5)

Consequently, we obtained the final style sequence Ys−final passing through the entire style Swin encoder.

After all adding noise processes, we use cross attention to introduce the style feature to latent space in denoising Unet decoder, precisely, this method generates key matrix(K) and value matrix(V) using style sequences, while Q remains unchanged:

$$Q={Y}_{C}{W}_{Q},K={Y}_{S}{W}_{K},V={Y}_{S}{W}_{V}$$

(6)

To extract semantic cues from natural language, we leverage a pre-trained vision-language model. This allows textual instructions to guide image synthesis in a highly controllable and interpretable manner.

CLIP stands as a formidable vision-language model, having been established as a central conduit for text-based image generation tasks16. While the T5 model, employed within the Imagen framework, enhances the comprehension of textual content, it encounters challenges in reconstructing image features that align seamlessly with the given description. Furthermore, T5 struggles to grasp the semantics that underpin image layouts40. Conversely, pre-trained CLIP boasts an extensive data repository. Consequently, we opt for pre-trained CLIP16 as our prompt condition encoder. In detail, we procure text embeddings through a pre-trained CLIP model, which are subsequently fed into a transformer to obtain a latent encoding. This latent encoding is then mapped to the LDM utilizing the cross-attention mechanism within a designated window.

link